Death and Taxes

HEY, BOSSES! Let Us Work from Home!

Pride Month Held Some Valuable Lessons for Country Music’s LGBTQ+ Community

National Gun Violence Day Honors Victims and Survivors, But It’s Not Enough

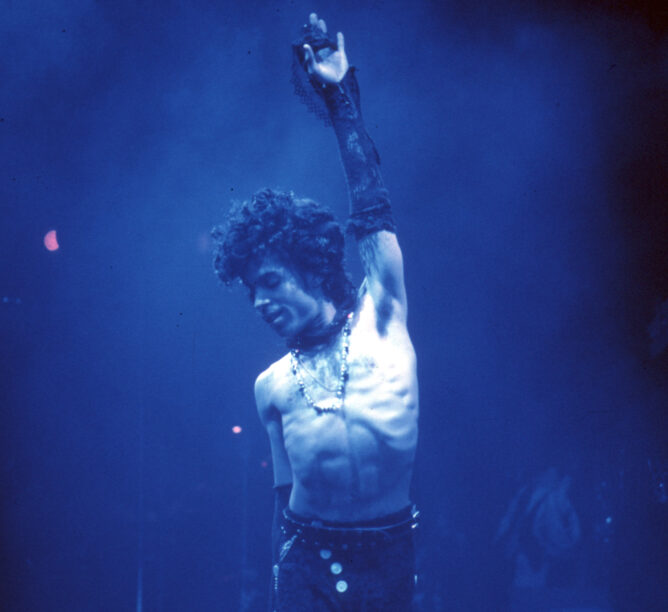

Trump’s Impeachment Defense: What About Madonna?

Up in Smoke

Make It Sing

National Treasure

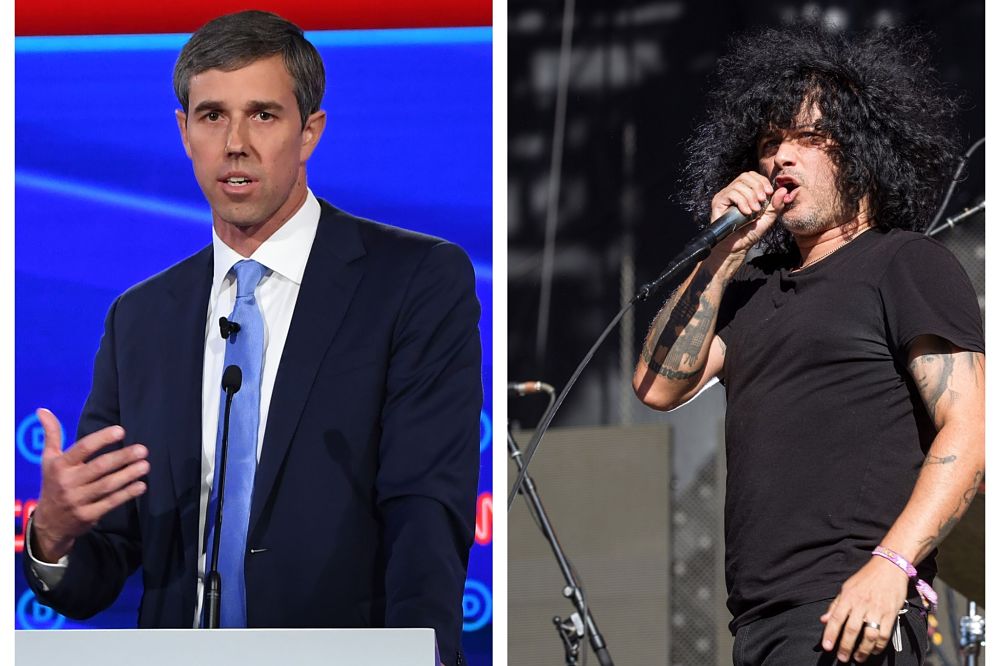

Third Eye Blind’s Stephan Jenkins on His Debt to Black Culture

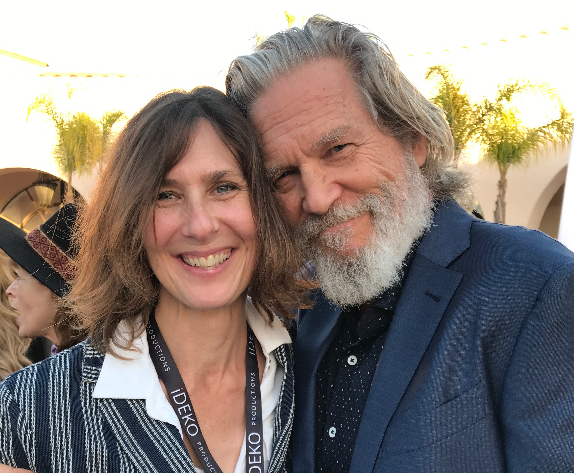

Emergence Is Interactive: A Jeff Bridges and Susan Kucera Essay on Climate Change

Don’t Tread on Me! Navigating Among the Politically Unmasked

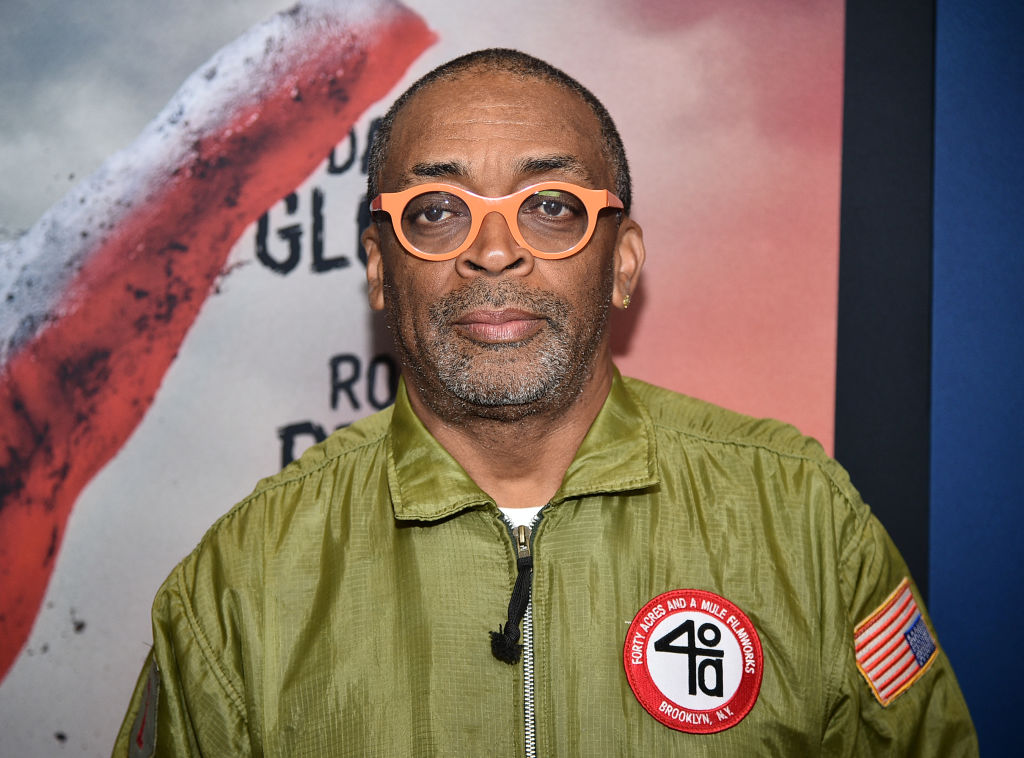

Spike Lee Shares ‘3 Brothers’ Short Film With George Floyd, Eric Garner Footage

Stephan Jenkins on Third Eye Blind’s New EP and Why He’s So Pissed Off at the Government’s Response to COVID-19

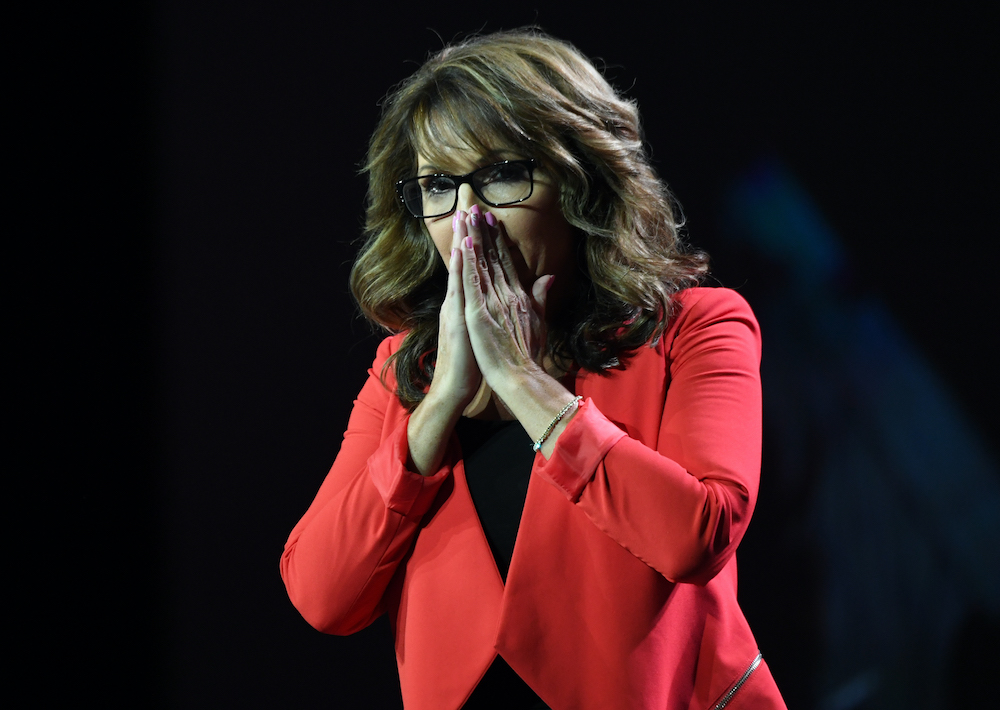

Sarah Palin Raps ‘Baby Got Back’ on The Masked Singer